If you are a legacy software security vendor, you should probably be a little scared - Anthropic’s Claude Code Security announcement literally wiped billions in market cap off the cybersecurity sector overnight (albeit temporarily). If you are an attacker who relies on "forgotten" business logic flaws to gain access, you should also be looking for a new hobby. And if you’re a developer already neck-deep in AI tools, you can genuinely be excited, as the dream of a "self-healing" code pipeline just got (a little) closer to reality. For everyone else, though, I think it's safe to stay calm. While Claude Code Security is an impressive leap forward, it isn't going to rewrite the laws of physics (or security) tomorrow. It’s a powerful new tool in a rapidly evolving toolbelt, but like any tool, its impact depends entirely on how you use it.

In our foundational post, we broke down the intersection of AI and security into four key pillars. To understand where Anthropic’s new capability sits, we have to look at those categories:

Claude Code Security fits squarely into Category 3: Empowerment of Security. It is a defensive capability designed to help human researchers find the needles in the ever-growing haystack of enterprise code.

The capabilities Anthropic is touting are a significant departure from traditional Static Application Security Testing (SAST). Most legacy tools are essentially high-powered RegEx machines - they look for specific patterns that match known vulnerabilities.

Claude Code Security, however, treats code like a story. It reads and reasons about the application, understanding how components interact and tracing data flows. This allows it to:

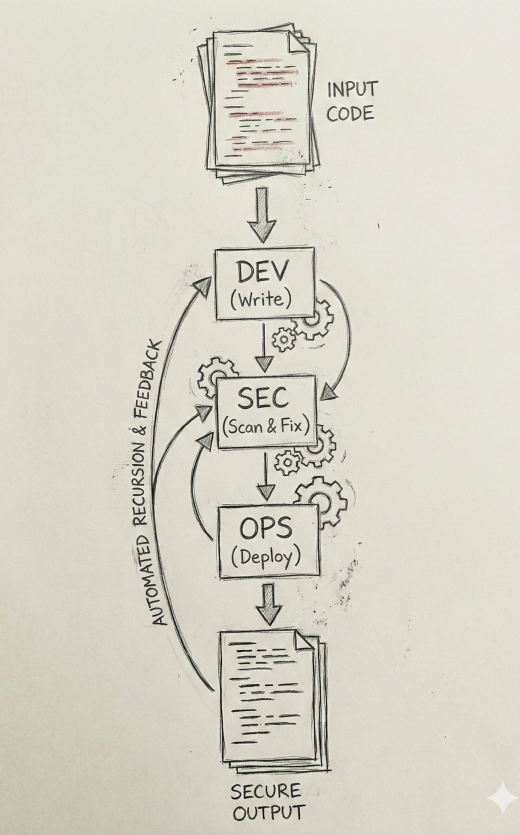
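To make the contrast between pattern matching and data-flow tracing concrete, here is a deliberately tiny Python sketch. It is not how Claude Code Security or any particular SAST product works: the three-line `SOURCE` snippet, the `regex_scan` and `taint_scan` helpers, and the single SQL-injection rule are all hypothetical. The regex rule only fires when string concatenation appears directly inside `execute(...)`, while the taint pass follows untrusted `request.` input through intermediate variables until it reaches the sink.

```python
import re

# Hypothetical toy "application": one statement per line.
SOURCE = [
    'user_id = request.args["id"]',
    'query = "SELECT * FROM users WHERE id = " + user_id',
    'cursor.execute(query)',
]

# Pattern-based rule: flags a string literal concatenated directly inside execute().
SQLI_PATTERN = re.compile(r'execute\(\s*["\'].*["\']\s*\+')


def regex_scan(lines):
    """Return indices of lines that match the known-bad pattern."""
    return [i for i, line in enumerate(lines) if SQLI_PATTERN.search(line)]


ASSIGN = re.compile(r'^\s*(\w+)\s*=\s*(.+)$')  # simple "name = expression" statements
SINK = re.compile(r'execute\((\w+)\)')        # execute() called with a single variable


def taint_scan(lines, sources=("request.",)):
    """Propagate taint from untrusted sources through assignments to the execute() sink."""
    tainted = set()
    findings = []
    for i, line in enumerate(lines):
        sink = SINK.search(line)
        if sink and sink.group(1) in tainted:
            findings.append(i)  # tainted data reached the query sink
            continue
        match = ASSIGN.match(line)
        if match:
            var, expr = match.groups()
            # Crude: also picks up words inside string literals.
            identifiers = set(re.findall(r'\b\w+\b', expr))
            if any(src in expr for src in sources) or identifiers & tainted:
                tainted.add(var)
            else:
                tainted.discard(var)  # reassignment from a clean expression clears taint
    return findings
```

On `SOURCE`, `regex_scan` finds nothing, while `taint_scan` flags the `cursor.execute(query)` line, because the dangerous concatenation happens one statement before the sink. The component-level reasoning described above goes far beyond this single-file toy.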

One of the ways this can really move us forward as an industry is by using our DevSecOps pipelines not just to test code for issues, but also to remediate those issues and feed the results back in, recursively improving the output of the process. This both improves security outcomes and speeds up development.
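A scan, remediate, re-scan loop of this kind might be sketched as follows. This is a minimal sketch under stated assumptions: `scan` and `propose_fix` are hypothetical stand-ins (in practice, a scanner such as Claude Code Security and an AI- or human-authored patch step), and the `Finding` type, `remediation_loop` function, and `max_rounds` cap are illustrative names, not any product's API. The toy scanner and fixer just swap MD5 for SHA-256 so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Code = Dict[str, str]  # hypothetical representation: file name -> source text


@dataclass(frozen=True)
class Finding:
    file: str
    rule: str


def remediation_loop(
    code: Code,
    scan: Callable[[Code], List[Finding]],
    propose_fix: Callable[[Code, Finding], Code],
    max_rounds: int = 3,
) -> Tuple[Code, List[Finding]]:
    """Scan, apply a proposed fix per finding, and re-scan until clean or out of rounds."""
    for _ in range(max_rounds):
        findings = scan(code)
        if not findings:
            return code, []
        for finding in findings:
            code = propose_fix(code, finding)
    # Anything still flagged after the final round is returned for human review.
    return code, scan(code)


# --- Hypothetical toy scanner and fixer so the sketch runs on its own ---
def toy_scan(code: Code) -> List[Finding]:
    return [Finding(name, "weak-hash") for name, src in code.items() if "hashlib.md5" in src]


def toy_fix(code: Code, finding: Finding) -> Code:
    patched = dict(code)  # avoid mutating the caller's copy
    patched[finding.file] = patched[finding.file].replace("hashlib.md5", "hashlib.sha256")
    return patched


if __name__ == "__main__":
    fixed, remaining = remediation_loop(
        {"auth.py": "digest = hashlib.md5(pw).hexdigest()"}, toy_scan, toy_fix
    )
    print(fixed["auth.py"])  # digest = hashlib.sha256(pw).hexdigest()
    print(remaining)  # []
```

Capping the loop with `max_rounds` keeps a fix that never converges from cycling forever; whatever is still flagged at the end is handed back to a person rather than silently accepted.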

As impressive as finding 500+ zero-days in open-source code is, we have to be realistic about the impact. Back in July 2025, when XBOW was sitting atop the HackerOne bug bounty leaderboards, CyberScoop wrote a piece titled "Is XBOW’s success the beginning of the end of human-led bug hunting? Not yet." I think we should remember some quotes that were true then and are still true now:

This is not to say that what these tools are solving for is unimportant; they absolutely provide value and will continue to get better over time. But they aren't complete yet.

So the first thing to remember is that people are still "beating" these AI tools in many ways. While AI excels at predictable or clearly defined tasks, humans are still better at complex tasks and at working under unclear constraints and instructions. And we all still find ourselves beating our heads against a wall chasing down a hunch, or reasoning our way through a maze to an unexpected result at the end.

Second, real-time protection is still a separate battle. Claude Code Security is a "build-time" tool: it makes your code safer before it ships, but it doesn't stop an attacker from using prompt injection or session hijacking on a running application. We still need the systemic protections of Category 2 to monitor live AI agents.

And third, the human element remains the weakest, and least protected, link in the age of generative AI. Even with a perfectly secure codebase, AI-powered social engineering is a massive risk. An attacker doesn't need to exploit a buffer overflow if they can use a perfect AI-generated voice clone to trick an admin into handing over their credentials.

So Claude Code Security is a fantastic addition to the defender’s arsenal. It helps us clear out the "vulnerability debt" that has plagued software for decades, allowing security teams to focus on the higher-level architectural and social challenges. It's another tool in the belt - albeit a very shiny, very smart one - but the belt still needs a human to wear it.

As always, we look forward to keeping in touch, so don’t hesitate to reach out to us at questions@generativesecurity.ai if you want to discuss how to integrate AI into your security tooling, or to better secure the generative AI you're putting in front of your customers today.

About the author

Michael Wasielewski is the founder and lead of Generative Security. With 20+ years of experience in networking, security, cloud, and enterprise architecture, Michael brings a unique perspective to new technologies. Working on generative AI security for the past 3 years, Michael connects the dots between the organizational, technical, and business impacts of generative AI security. Michael looks forward to spending more time golfing, swimming in the ocean, and skydiving... someday.