Recently, I’ve been spending a lot of time in conversations with just about everyone in the IT ecosystem - partner conversations with Cloud Service Providers (CSPs), demos with technology vendors, customer briefings with enterprise leaders, investor conversations on the direction of AI security, and more. Once you get past all the technology, these conversations bring up a much bigger anxiety. The question isn’t just "How do we secure this?" but rather: "Who is responsible for security, and what does that responsibility actually mean?"

We are currently witnessing a massive land grab with the "security" label. Google and Wiz are announcing deeply embedded features that promise a "secure by default" platform. Model providers like Anthropic are baking advanced guardrails directly into the weights of their frontier models. Meanwhile, investors are looking at the landscape, trying to determine if the solutions will be delivered by the CSPs, or if there is a permanent gap for third-party innovators to fill.

Everywhere you look, a different vendor is raising their hand to claim they have the solution. But in these conversations, customers are asking the one thing the marketing decks won’t address: When a model hallucinates a breach or an agent exceeds its authority, who is left holding the bag? If you’ve been in the cloud game long enough, you remember the early days of the Shared Responsibility Model. It was a clean line: the provider secures the "of" the cloud (hardware, cables, hypervisors), and you secure the "in" the cloud (data, OS, apps). But in the age of agentic AI, with models discovering zero-days via Anthropic’s Claude Mythos and agents autonomously executing code (or deleting entire databases), that clean line has become a blurry mess.

To understand where we are, we have to look at the layers being built right now:

The industry loves to talk about "responsibility" because responsibility is a contract. It’s an SLA. It’s something legal can point to. When I was at AWS, we would downplay SLAs because they promised you a refund on the bill based on availability, with no real compensation for the business impact that the loss of availability had. The business doesn't live or die based on who has responsibility; the business lives or dies based on the outcomes.

The CSA’s recent publication, The “AI Vulnerability Storm”: Building a “Mythos-ready” Security Program, makes a chilling point: the time between vulnerability detection and exploitation has effectively collapsed to zero. At Google Next, people were throwing around the 22-second number for vulnerability handoff. In this environment, a provider might be "responsible" for patching a model flaw, but if an autonomous agent drains a database or leaks PII before that patch is applied, the outcome is owned entirely by the customer.

We are seeing a repeat of the "S3 Bucket" era. Back then, AWS was responsible for the service, but the customer was responsible for the configuration. If you left the bucket open, it was your fault. In 2026, if you give an agent access to your ERP and it "hallucinates" a fraudulent invoice because of a prompt injection, Google or Anthropic will point to their documentation and say you didn't configure your specific guardrails correctly. Sure, the technical responsibility was shared, but the outcome - the $2M loss - is yours alone.

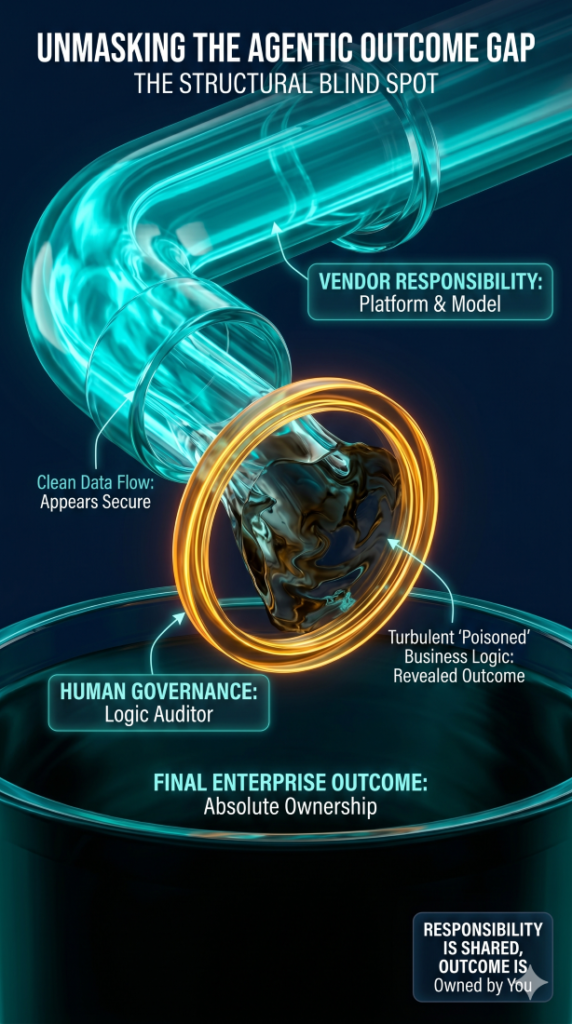

As I've said in the past, we don't need to reinvent the wheel here; we can learn from past successes in the cloud. Governance isn't something you "turn on" in Vertex AI. Google and Wiz can give you the tools to monitor your agents, but they cannot tell you if an agent’s decision was correct for your specific business context. This is the structural blind spot.

True governance requires a cross-functional effort where legal, IT, finance, and lines of business all evaluate the risks introduced by generative AI. While only a small technical team might implement the guardrails, the entire organization is impacted when they fail. Where the traditional security approach expects practitioners to be the experts, implementing their own checks and enforcements, the agentic future requires everyone to at least understand the risks (and threats) they are dealing with. Standard technical risks have a specific blast radius; when an AI agent goes rogue or gives away more than it should, it creates legal liability, financial loss, and reputational damage all at once. You can't leave these fundamental business impact decisions to one part of the business - that's just asking for scapegoating. When security controls fail, as is almost inevitable in today's world, the business outcomes are felt by everyone.

Why Responsibility is Not Ownership

If outcomes are the only metric that matters, we have to bridge the Outcome Gap. The hard truth is that while responsibility can be shared, ownership is absolute. The industry is currently offering a fragmented defense: Google secures the infrastructure, Anthropic secures the model, and Palo Alto secures the agentic endpoint. But relying on any single layer or a single vendor to solve the problem is a tactical error. In a post-Mythos world, where adversarial AIs probe for weaknesses at machine speed, a "responsible" vendor might patch a flaw in record time, but if that flaw was exploited to drain your data three minutes earlier, the vendor’s successful "responsibility" doesn't fix your failed "outcome." In this landscape, using AI to secure our platforms is no longer optional; it's a must.

As a customer, your role in this expanded Shared Responsibility Model is no longer just about configuration; it’s about validation across every layer. You are the only one who can act as the Logic Auditor, ensuring that agentic workflows have the necessary checkpoints for your specific industry. You must pay attention to the Identity Perimeter, defining the blast radius for every non-human entity. The announcements at Next ’26 provide the enterprise-grade plumbing we’ve been waiting for, but solutions like Model Armor, while fantastic, are features, not guarantees.
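
To make the Logic Auditor and Identity Perimeter ideas a little more concrete, here is a minimal Python sketch of a deny-by-default policy for a single agent. Everything in it is an assumption made for illustration: the `AgentPolicy` class, the `evaluate` function, and the action names are hypothetical and do not come from Google, Anthropic, Wiz, or any other vendor's product. It only shows the shape of the idea: an explicit list of what the agent may do (its blast radius) and a subset of actions that must wait for a human (the checkpoints).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy for one non-human identity."""
    agent_id: str
    allowed_actions: frozenset[str]                # the agent's blast radius
    needs_approval: frozenset[str] = frozenset()   # checkpoints that need a human


def evaluate(policy: AgentPolicy, action: str, approved_by: str | None = None) -> str:
    """Return 'allow', 'hold', or 'deny' for a requested action."""
    if action not in policy.allowed_actions:
        return "deny"   # outside the blast radius: denied by default
    if action in policy.needs_approval and approved_by is None:
        return "hold"   # permitted, but only after a human signs off
    return "allow"


# Illustrative policy for an agent that works with ERP invoices.
invoice_agent = AgentPolicy(
    agent_id="erp-invoice-agent",
    allowed_actions=frozenset({"read_invoice", "create_invoice"}),
    needs_approval=frozenset({"create_invoice"}),
)

if __name__ == "__main__":
    for action in ("read_invoice", "create_invoice", "delete_database"):
        print(action, "->", evaluate(invoice_agent, action))
```

The design choice worth noticing is that the business, not the platform, decides which actions land in `needs_approval`. A vendor can enforce a policy like this, but only you know that creating an invoice deserves a second pair of eyes in your industry.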

Vendors sell features; they don’t sell outcomes. In this new era, the "Shared Responsibility Model" is less of a contract and more of a warning. The provider will secure the pipes, but if the water is poisoned, you’re the one who has to drink it. It’s time to stop asking who is responsible and start asking: “How do I prove the outcome is secure?”

If this is something you're still trying to work out on your own and you could use some help, connect with us at questions@generativesecurity.ai. We can help you establish the right governance and ask the hard questions to make sure you have the best defense against attacks on your AI.

About the author

Michael Wasielewski is the founder and lead of Generative Security. With 20+ years of experience in networking, security, cloud, and enterprise architecture, Michael brings a unique perspective to new technologies. Working on generative AI security for the past 3 years, Michael connects the dots between the organizational, the technical, and the business impacts of generative AI security. Michael looks forward to spending more time golfing, swimming in the ocean, and skydiving... someday.