If you read our blog recapping Google Next ‘25, you would have seen that AI seemed to be the only thing Google was working on. But this year, I’m pretty sure the word “agentic” showed up 20 times more frequently than any other word in the keynote, in any of the session headlines, or in any of the printed material on the floor. And don’t get me wrong, it’s for a good reason – agents have taken over the enterprise narrative. But let’s talk about what all this meant for security. Based on the sessions and the massive noise around the Google-Wiz acquisition, three major themes emerged that every security leader needs to track.

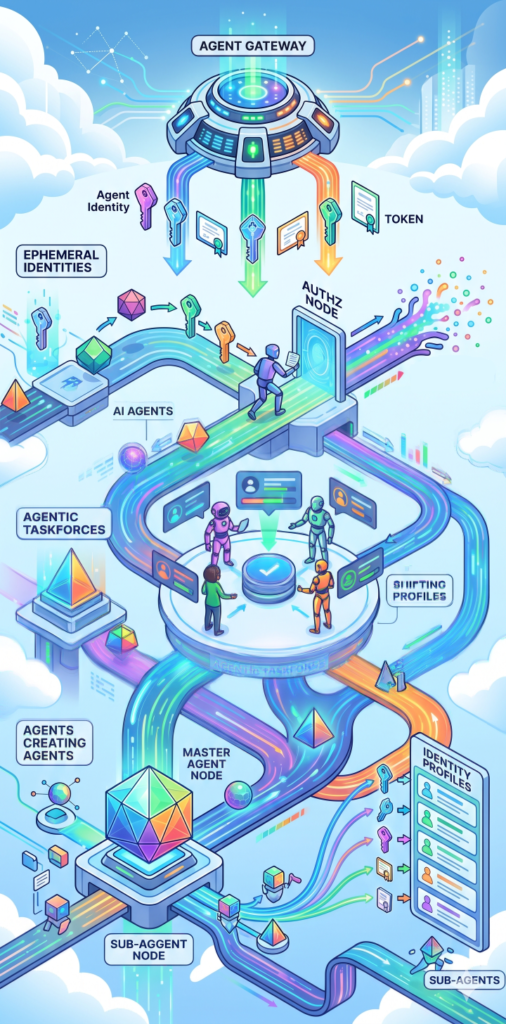

The first major theme would be the shift away from model security instead to agent security. Last year there were lots of conversations around foundational model security and the supporting architecture and data. Threats around data poisoning, data exfiltration, model and system prompts, and guardrails were front and center. Now it seems as if this was being treated as a solved problem. Yes, Google’s Model Armor is fantastic, but as we’ve talked about on this blog it misses a lot of risks beyond basic technology attacks. Even talks with Palo Alto and their customers seemed to treat this as an ongoing but under control issue. I fundamentally disagree. Regardless, the idea of Agent Security has taken its place. So now, the focus was more around identity, permissions, behavioral access, and governance. Agent Gateway is one announcement where I see real value in the solution, but it is only in preview and looks more at the workflow than the model itself. While this is all extremely important, I fear it’s sucking the oxygen out of the room for the other, still unsolved problems as well.

The second major shift was the pivot from external-facing models and chatbots to internal-facing "Agentic Taskforces." As a result, the security conversation was less about protecting against jailbreaks and prompt injections, and more about identity, data connectivity, and confidentiality. If you’ve heard me rant about Googles announcements last year, I really disliked how they implied that agents could have access to internal and external facing data simultaneously without clear identity/cross-talk protection. This year, the idea of identity, permissions, and how to get the right data to the right place at the right time was much more prevalent. Google’s Agent Identity is evidence they saw the problem, but I still think their fix is about 50%. Agents are no longer just service accounts with broad permissions; they are being treated as ephemeral identities. But I have a feeling we’re going to start having explicit AI and Zero Trust conversations By Google Next ‘27.

However, there is a looming gap in the capabilities of identity products, and the reality of identity implementation. While Google is providing the tools for Least Privilege Agents, the reality on the expo floor was different. Every CISO and engineer I spoke to agreed that we have been struggling to manage least-privilege over the last 30 years, and nothing drastic enough has changed to think we’ll do it right managing 10,000 autonomous agents. We are effectively creating a new class of "Shadow AI" where agents are creating other agents, and our legacy IGA (Identity Governance and Administration) tools aren't built for that velocity. There were some interesting conversations about ephemeral identities for agents that covers both AuthN and AuthZ credentials, but it still seems very early.

The third major theme that I saw was not in any of the vendors on the Expo floor, but part of almost every session and conversation around agent security – red teaming. The idea of automated red teaming and the need to Red Team your agents as they are created was echoed by CloudWerx, Palo Alto, Google, and of course Wiz with their announcements including the Red agent. I talked about this in the blog about red teaming going mainstream almost a year ago, and it seems that idea was prescient. While Palo Alto put it as part of their security products, CloudWerx put it as part of their orchestration, and Wiz put it as part of their Agent Defense approach, the idea of red teaming as mandatory step in the process was consistent, even if where in the process was not. Sundar Pichai mentioned that 75% of Google’s new code is now AI-generated. If you are generating code and deploying agents at that scale, manual security reviews are a physical impossibility. Automated red teaming is the only path forward.

To be honest though, I fear this is not what is going to happen in the real world. Too many teams, both development and security, see Red teaming as an optional exercise, and until they are forced to use (and pay for) this, it’s more of the industry selling itself as opposed to real market change.

All told I saw some really cool products, really cool announcements, and I’m looking forward to building more on GCP. I’m especially looking forward to using Agent Gateway to extend near-real-time protection for the complex, multi-session threats we are developing at Generative Security, with no additional resiliency risk. More to come on that soon.

If you want to talk with us about what we saw, what we think the future of gen AI Security at Google, or how to integrate some of these capabilities into your workflows, feel free to reach out at questions@generativesecurity.ai. We look forward to hearing from you and hearing what your experiences were like.

About the author

Michael Wasielewski is the founder and lead of Generative Security. With 20+ years of experience in networking, security, cloud, and enterprise architecture Michael brings a unique perspective to new technologies. Working on generative AI security for the past 3 years, Michael connects the dots between the organizational, the technical, and the business impacts of generative AI security. Michael looks forward to spending more time golfing, swimming in the ocean, and skydiving... someday.