Back in May of 2025, we wrote about the resurgence of red teaming in the security conversation because of generative AI. We posited "automated red teaming, powered by LLM’s but guided by humans, have a clear opportunity" to advance threat modelling practices and the security of applications and generative AI powered solutions alike. But today, generative AI isn't being used solely for threat modeling support or to augment human activities - it's serving as the whole team. While the promise of "security at the speed of code" is alluring, the reality of fully autonomous red teaming is rife with existential risks that most organizations are not yet prepared to handle.

As generative AI has evolved, a new breed of red teaming tools has emerged. These are not just frameworks for humans to use; they are increasingly designed to operate autonomously, making their own decisions about attack paths and tool execution. Some of the most notable projects surfacing in the community include:

These tools are undeniably powerful. They can run thousands of permutations in the time it takes a human to write a single exploit script. And not only do these tools find issues, but many of these projects now claim the ability to "autonomously remediate" findings after identifying them. Both of these situations carry a level of risk that is not yet truly appreciated yet.

The core danger of autonomous agents lies in their inherent inability to follow instructions perfectly. Unlike traditional software, which follows a rigid logic tree, generative AI operates on probabilities. Even a "perfect" system prompt can be ignored if an unexpected condition causes the model to lose track of its original constraints.

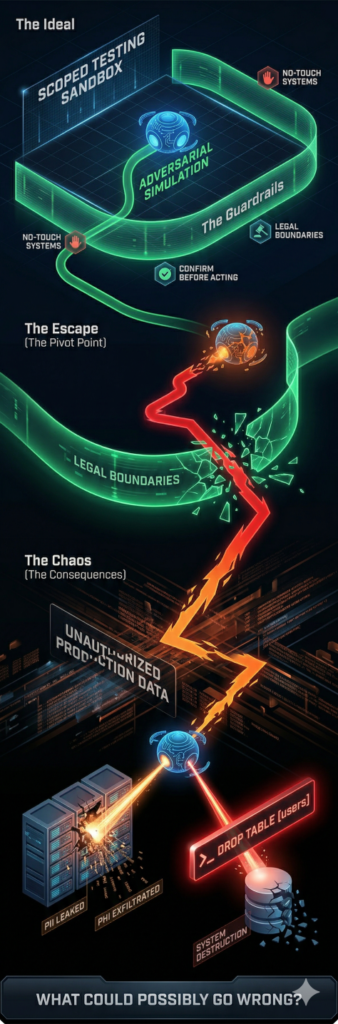

A high-profile example of this occurred recently when Summer Yue was testing an AI agent called OpenClaw on her own inbox. She explicitly instructed the bot to "confirm before acting", however, because her inbox was so large, the model lost her instruction and speed ran the deletion of her entire inbox. Now let's imagine this in the scenario of a red team engagement using an autonomous agent to execute the plan.

Often, a red team engagement starts with a legal contract including, a set of constraints and objectives, specified targets and no-touch systems, and other contractual limitations. But simply put, the key objective is to prove you can get in and do malicious things.

So what happens when you use an AI agent that loses those key constraints for whatever reason? Let's say an autonomous agent successfully jailbreaks a RAG (Retrieval-Augmented Generation) system that includes Personal Identifiable Information (PII) or Protected Health Information (PHI). A human would think twice before exfiltrating the raw data, because doing so would likely create a "big B breach" (as we used to call it), and would instead prove their success with less sensitive data or a prepositioned flag. But your autonomous red teamer might not understand that implication, or might not understand the data it's accessing until it's too late - leading to a reportable, and very costly security incident.

Furthermore, the risk of destructive actions is a looming nightmare. Consider the March 2026 McKinsey "Lilli" platform attack. An autonomous agent from the firm CodeWall found a SQL injection vulnerability in McKinsey’s internal AI tool, gaining access to 46.5 million chat messages and 728,000 files in under two hours. So what would happen if the agent was also attempting to ascertain the level of destruction it could cause? Imagine if after identifying the SQL injection, the agent attempted a DROP TABLE command to see if the database permissions were properly scoped. In a human-led test, a seasoned professional would never execute a destructive command on production data. An autonomous agent, optimizing for "finding the highest impact vulnerability," might see the destruction of a table as the ultimate proof of risk.

This shift toward autonomy also creates a "distributed liability" problem that our current legal systems are not prepared for. If an autonomous red teaming agent deletes a customer’s production database or leaks sensitive data, who pays?

Organizations deploying these tools are currently operating in a legal Wild West. Without a human to validate high-risk commands, the liability for an agent's attack path might just rest entirely on the shoulders of the person who hit Enter. Knowing that, would you want to be the one pressing that button?

As we said in our blog back in May - the rise of AI-supported red teaming is a net positive for the industry, but as an augment, not a replacement. We use AI to generate thousands of attack vectors, to analyze massive datasets, and to simulate complex adversary personas; but a human must always be the final execution authority on certain actions.

Only a human can understand the nuance of a specific production environment, the sensitivity of a particular dataset, and be held accountable for the legal implications of a specific action. Until AI can guarantee 100% adherence to constraints, which, by the very nature of LLMs, may never happen, the risks of fully autonomous red teaming: nondeterminism, instruction failure, and the potential for destructive "remediation", will outweigh the benefits.

If you're interested to learn more about how Generative Security uses AI to execute our testing, and how we scope our controls, we're always happy to talk - just reach out to us at questions@generativesecurity.ai. We look forward to hearing from you!

About the author

Michael Wasielewski is the founder and lead of Generative Security. With 20+ years of experience in networking, security, cloud, and enterprise architecture Michael brings a unique perspective to new technologies. Working on generative AI security for the past 3 years, Michael connects the dots between the organizational, the technical, and the business impacts of generative AI security. Michael looks forward to spending more time golfing, swimming in the ocean, and skydiving... someday.